ORA ClientTalk

Reducing cognitive load in AI-assisted compliance decisions through semantic data visualization

Timeline

January 2025 - May 2025

Company

Mentivista (Client: ORA Space), an AI-powered LMS for beauty & wellness businesses. This project focused on the 'Shadowing Training' feature, enabling managers to review transcripts and coach sales representatives on compliance and empathy.

Role & Team

Founding product designer, collaborating with 1 PM, 1 AI engineer, and 2 software engineers.

Responsibility & Collaboration

As the first design hire, I led the end-to-end redesign and architected the semantic decision interface for AI-driven risk and empathy auditing in regulated workflows.

With the PM, I defined the MVP strategy and scope, prioritizing the visualization of otherwise invisible FDA risks and soft-skill gaps to ensure regulatory compliance.

With the AI and software engineers, I built a Semantic Design System that maps latent model outputs into a deterministic visual hierarchy, translating opaque data into actionable heuristics.

Impact

Decision Validation Effort

↓ ~85%

backtracking

Reduced re-reading and manual verification by anchoring insights.

FDA Compliance Miss Rate

↓ Near 0

during monitored period

Lowered downstream risk from audits and regulatory remediation.

Operational / Coaching Velocity

↑ 3.5x

Enabled managers to move from review to action significantly faster.

Project Background

When AI outputs data, managers still make decisions.

The Challenge

Designing a decision system managers could trust in high-risk, regulated workflows.

Key Improvements

1

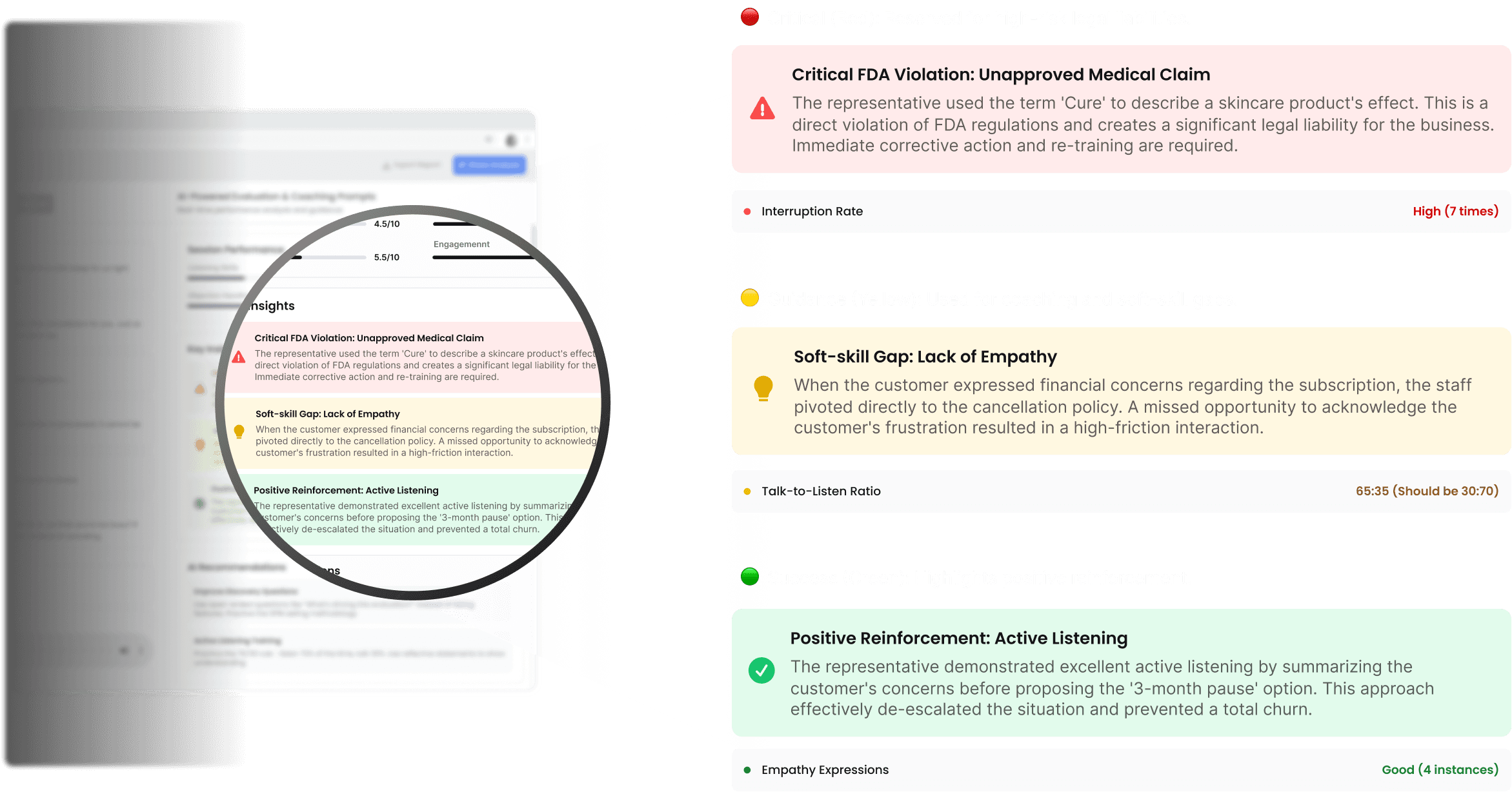

3-Layer Semantic System for Risk Prioritization

By introducing a strict 3-layer semantic token system, we transformed flat AI output into a prioritized decision surface.

2

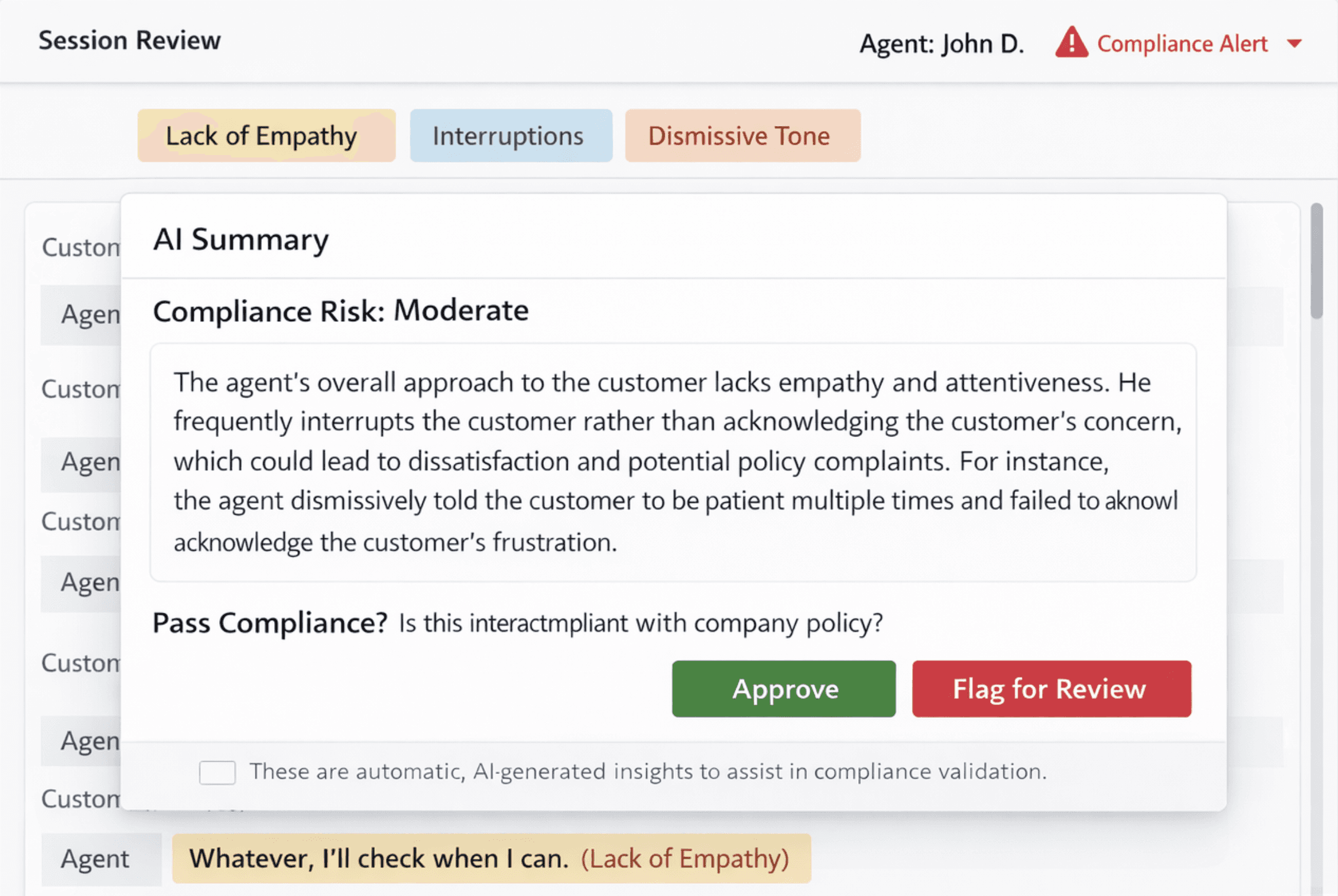

Context-Anchored Interaction for Evidence Validation

We linked each AI insight directly to its exact supporting evidence in the transcript.
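One way to picture this anchoring is a minimal sketch in which every insight carries a pointer to a transcript turn and a character range. The type names, fields, and sample transcript below are assumptions for illustration; the case study does not document the production data model.

```typescript
// Hypothetical shapes: not the product's real data model.
interface TranscriptTurn {
  id: string;
  speaker: string;
  text: string;
}

interface EvidenceAnchor {
  turnId: string; // transcript turn the insight points at
  start: number;  // inclusive character offset within that turn's text
  end: number;    // exclusive character offset
}

interface Insight {
  claim: string;
  anchor: EvidenceAnchor;
}

// Returns the exact quoted span an insight relies on, or null when the anchor
// does not match the transcript, so the UI can flag it instead of guessing.
function resolveEvidence(insight: Insight, transcript: TranscriptTurn[]): string | null {
  const turn = transcript.find((t) => t.id === insight.anchor.turnId);
  if (!turn) return null;
  const { start, end } = insight.anchor;
  if (start < 0 || end > turn.text.length || start >= end) return null;
  return turn.text.slice(start, end);
}

// Invented sample data for illustration only.
const transcript: TranscriptTurn[] = [
  { id: "t1", speaker: "Client", text: "Will this get rid of my wrinkles?" },
  { id: "t2", speaker: "Rep", text: "Yes, this treatment is guaranteed to work." },
];

const insight: Insight = {
  claim: "Unsupported efficacy guarantee",
  anchor: { turnId: "t2", start: 5, end: 41 },
};

const evidence = resolveEvidence(insight, transcript);
```

Returning `null` for a stale anchor, rather than a best-effort match, keeps the manager from validating a claim against the wrong sentence.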

User Pain Points

Managers struggled to distinguish critical FDA risks from minor soft-skill gaps because the AI output was flat and had no hierarchy.

“The scariest part is that a serious FDA compliance [issue] looks exactly like minor coaching feedback in the report. When I’m busy, I’m terrified I’ll skim past something that could actually get us in legal trouble.”

Senior Med Spa Manager

“I spend more time trying to understand what actually matters than fixing the problem itself. I end up re-reading the whole transcript just to feel safe.”

Regional Manager, Aesthetics Clinics

“There’s no hierarchy at all. Everything is flagged the same way. I still have to mentally rank what’s dangerous, what’s important, and what can wait.”

Compliance Lead, Wellness Clinic Group

Business Goal

Maximize coaching velocity by restructuring AI output into a decision-first system that prioritizes legal risk over secondary feedback.

System Re-Architecture

I re-architected the system around a decision-first structure that explicitly encodes judgment, rationale, evidence, and decision context over time.

Design Exploration

Semantic Clarity — Traffic Light System

1) Trade-Offs

1st Decision

Highlighting Everything the AI Flagged

⚠️ Why It Failed

There were many highlights, but it was still unclear why the conversation was considered problematic.

Labels like “Lack of empathy” were abstract, with no explanation of what specifically made a response empathetic or not.

2nd Decision

Letting the AI Explain Itself in a Paragraph

⚠️ Why It Failed

The summary still felt like another opaque AI output, with no reliable way to audit or challenge specific claims.

Final Decision

Structuring AI Feedback into Semantic Tokens

✅️ Why It Worked

Reduced cognitive load by converting abstract AI labels into structured, human-readable reasons that managers could evaluate without scanning the entire transcript.

Improved trust calibration by turning opaque AI judgments into explicit, debatable claims instead of unverifiable summaries.

Turned abstract AI feedback into an inspectable, debuggable decision surface.
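As a rough illustration of what structuring feedback into tokens could look like, the sketch below pairs each AI label with an explicit reason and sorts tokens by tier. The three tier names borrow the traffic-light framing and are assumptions, as are the field names and sample data; the case study does not enumerate the actual layers.

```typescript
// Illustrative tiers only, loosely following the traffic-light framing.
type Tier = "critical" | "warning" | "positive";

interface SemanticToken {
  tier: Tier;
  label: string;  // short AI label, e.g. "Lack of empathy"
  reason: string; // explicit claim a manager can agree or disagree with
}

// Lower number means the token is shown earlier.
const TIER_ORDER: Record<Tier, number> = { critical: 0, warning: 1, positive: 2 };

// Array.prototype.sort is stable, so tokens keep their original
// (transcript) order within the same tier.
function prioritize(tokens: SemanticToken[]): SemanticToken[] {
  return [...tokens].sort((a, b) => TIER_ORDER[a.tier] - TIER_ORDER[b.tier]);
}

// Invented sample data for illustration only.
const flat: SemanticToken[] = [
  { tier: "warning", label: "Lack of empathy", reason: "Rep changed topic after the client voiced a concern." },
  { tier: "positive", label: "Clear pricing", reason: "Rep stated the full price before booking." },
  { tier: "critical", label: "FDA risk", reason: "Rep guaranteed a treatment outcome." },
];

const ranked = prioritize(flat);
```

Because each token carries its own `reason`, a manager can challenge one specific claim without re-reading a whole summary paragraph.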

2) Design Decision

Full-background colors created alarm fatigue: when everything is emphasized, nothing stands out. I pivoted to a minimalist token system.

❌ Rejected

✅ Selected

Appendix 1 - Access Governance, Controlled by Super Admin

Defining the logic of authority by mapping professional roles to licensed scopes.

Designed access management that filters coaching insights so managers only see what they are actually licensed to act on.
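The filtering idea can be sketched as a role-to-scope lookup applied before insights reach the manager. The role names, scope names, and mapping below are hypothetical placeholders; the real mapping is whatever the Super Admin configures.

```typescript
// Hypothetical scope names, chosen only for this example.
type Scope = "fda_compliance" | "soft_skills";

interface CoachingInsight {
  id: string;
  scope: Scope;
  summary: string;
}

// Hypothetical role-to-scope table standing in for Super Admin configuration.
const ROLE_SCOPES: Record<string, Scope[]> = {
  compliance_lead: ["fda_compliance", "soft_skills"],
  sales_manager: ["soft_skills"],
};

// Keeps only the insights whose scope the role is allowed to act on.
// Roles missing from the table see nothing (deny by default).
function visibleInsights(role: string, insights: CoachingInsight[]): CoachingInsight[] {
  const allowed = new Set<Scope>(ROLE_SCOPES[role] ?? []);
  return insights.filter((i) => allowed.has(i.scope));
}

// Invented sample data for illustration only.
const insights: CoachingInsight[] = [
  { id: "i1", scope: "fda_compliance", summary: "Outcome guarantee made to client." },
  { id: "i2", scope: "soft_skills", summary: "Interrupted client twice." },
];
```

For example, `visibleInsights("sales_manager", insights)` keeps only `i2`, while an unrecognized role gets an empty list.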

Appendix 2 - Traceable Dashboard

Extending trust beyond individual decisions by preserving authority, context, and accountability over time.

Scores are interpreted in the context of repeated managerial judgments, preventing single-period variance from distorting longitudinal performance evaluation.

Consistency of judgment, not point-in-time scores

Manager transitions are explicitly preserved to ensure historical judgments remain attributable and interpretable across organizational changes.

Traceable authority and accountability per decision

Design System

Design System for Reliable, Actionable Signals

Semantic Tokens define urgency, risk class, and decision weight.

Component States preserve rationale and evidence linkage.

Accessibility Semantics ensure urgency is perceivable across modalities.
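To make the "perceivable across modalities" point concrete, here is a minimal sketch in which each urgency level maps to a color token, a non-color icon cue, and a text label for assistive technology. The urgency levels, token names, icon names, and label wording are all assumptions, not the shipped design system.

```typescript
type Urgency = "high" | "medium" | "low"; // assumed levels

interface TokenPresentation {
  colorToken: string; // hypothetical design-token name, not a real palette entry
  icon: string;       // non-color cue so urgency survives color-blind viewing
  spokenLabel: string; // text alternative for screen readers
}

// Every urgency level must define all three channels; the Record type
// makes a missing level a compile-time error.
const PRESENTATION: Record<Urgency, TokenPresentation> = {
  high: { colorToken: "risk.high", icon: "octagon-alert", spokenLabel: "High urgency" },
  medium: { colorToken: "risk.medium", icon: "triangle-alert", spokenLabel: "Medium urgency" },
  low: { colorToken: "risk.low", icon: "circle-info", spokenLabel: "Low urgency" },
};

// Builds the accessible name for a token: urgency first, then the reason.
function accessibleName(urgency: Urgency, reason: string): string {
  return `${PRESENTATION[urgency].spokenLabel}: ${reason}`;
}

const label = accessibleName("high", "Rep guaranteed a treatment outcome.");
```

Keeping color, icon, and spoken label in one record means a new urgency level cannot ship with only a color defined.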

Retrospective & Conclusion

This project reinforced that in AI-native systems, trust is not earned through model accuracy alone, but through semantic structure. By encoding urgency, evidence, and accountability into a 3-layer token system, I transformed opaque AI output into a decision surface managers could safely act on—without slowing down high-risk workflows.